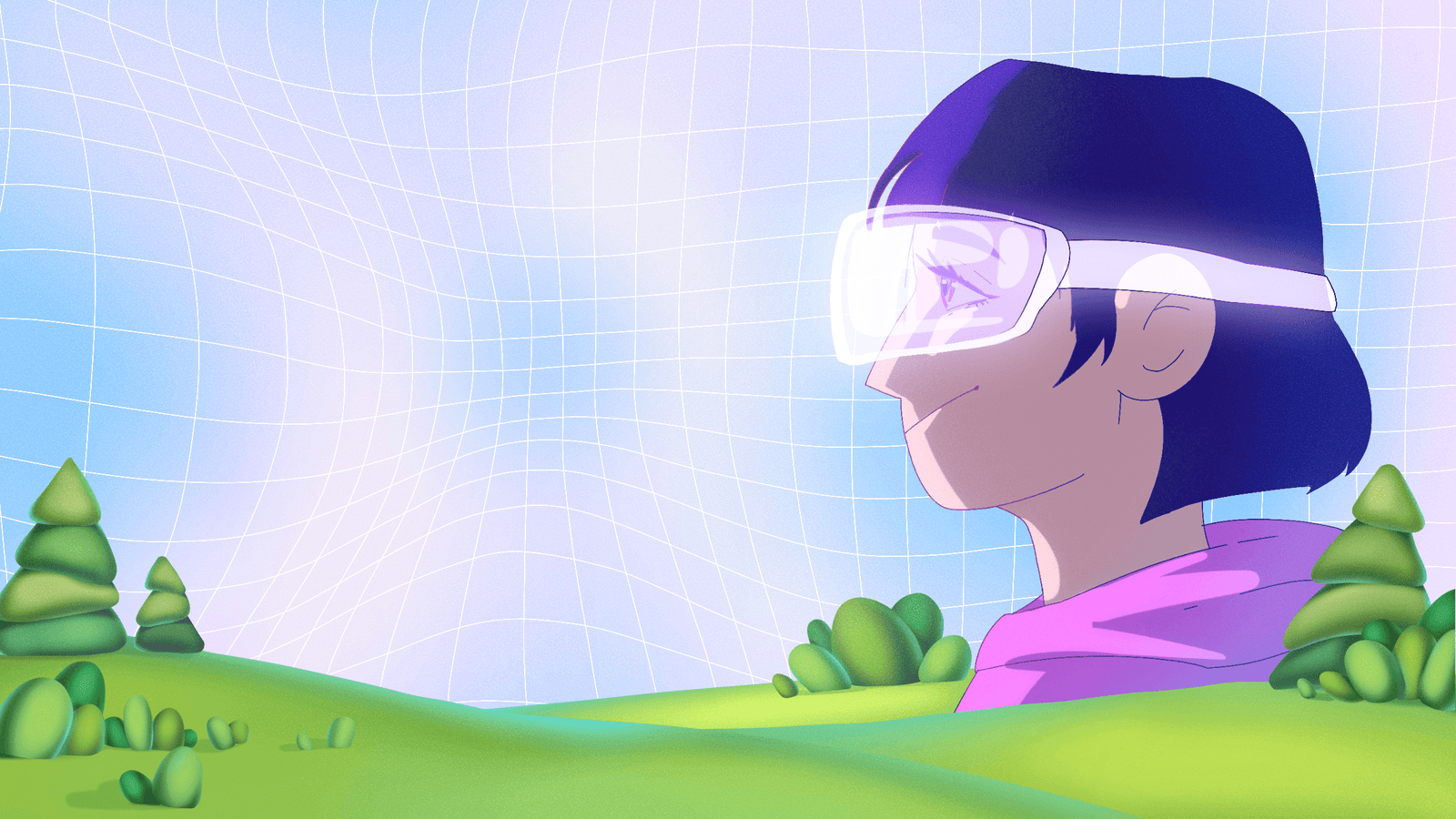

Understanding 3D Animation in VR & AR

Before diving into production, it’s helpful to clarify what 3D animation means within VR (virtual reality) and AR (augmented reality). In VR, the user is immersed in a fully 3D environment. Everything you animate – worlds, characters, objects – helps shape the sense of presence.

In AR, the user still occupies the real world, and animated 3D elements appear layered or anchored within it. The animation must feel like it belongs to that environment. The real challenge isn’t only how to make things move, but how to make them react – responding to gaze, gestures, or actions in real time.

Why it matters:

- Immersion: Animation, lighting, and physics build realism and presence.

- Engagement: Interactivity keeps the user active rather than passive.

- Versatility: From product demos to education and entertainment, 3D animation adapts to many fields when it is designed for the target platform.

Choosing the Right 3D Modeling & Animation Tools

To create interactive and believable VR/AR experiences, your toolkit matters as much as your concept. Here are the most popular and powerful tools that help bring ideas to life:

Blender

An open-source powerhouse for 3D modeling, rigging, and animation. Blender is ideal for independent studios and creators who need a robust pipeline for both cinematic animation and real-time rendering. Its built-in Eevee and Cycles render engines provide flexibility for both stylized and realistic visuals.

Autodesk Maya

A long-standing industry standard used across film, games, and interactive media. Maya’s precision and advanced rigging, dynamics, and character-animation tools make it perfect for high-end 3D production that later transitions into VR/AR environments.

Unreal Engine & Unity

These are the two most popular real-time engines used to deploy 3D animation in VR/AR. Unreal Engine shines for photorealism, real-time lighting, and cinematic control – great for architectural VR, storytelling, and high-end visualization. Unity stands out for cross-platform AR development and mobile performance, making it a go-to choice for interactive apps and educational simulations.

Adobe Aero & Spark AR

Both are suited to lighter, mobile-focused AR experiences. Adobe Aero enables artists to create immersive AR scenes without coding, while Spark AR powers social-media filters and branded AR campaigns that can reach millions of users quickly.

Pro tip: Many professional pipelines combine these tools – modeling in Blender or Maya, then importing assets into Unreal Engine or Unity for interactivity. The goal is to balance creative freedom with real-time performance.

Key Steps to Creating Interactive 3D Animation for VR & AR

Here’s a roadmap for building an interactive experience, structured to fit typical animation studio workflows.

- A. Pre-production: Concept + Storyboard

Define the experience: is it a VR simulation, an AR product configurator, or an immersive story? Map the user’s journey – what they see, do, and trigger. Storyboards should capture interactions as well as visuals.

- B. Asset Creation: 3D Models, Animation, Textures

Build optimized 3D models suited to your platform: denser meshes for powerful headsets, low-poly assets for mobile AR. Animate responses to user actions such as grabbing, pushing, or gazing, and refine textures and lighting for realism.
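One way to think about "animating responses to user actions" is as a small state machine that maps input events to animation clips. The sketch below is only an illustration: the state names, event names, and clip names are all hypothetical, and a real project would wire this logic into the animation system of the chosen engine.

```python
# Hypothetical interaction states, input events, and clip names.
# (state, event) -> next state; unknown pairs leave the state unchanged.
TRANSITIONS = {
    ("idle", "gaze_enter"): "highlighted",
    ("highlighted", "gaze_exit"): "idle",
    ("highlighted", "grab"): "held",
    ("held", "release"): "idle",
}

# Which animation clip should play while the object is in each state.
CLIPS = {
    "idle": "Idle_Loop",
    "highlighted": "Hover_Pulse",
    "held": "Held_Pose",
}


class InteractiveObject:
    """Tracks one object's interaction state and the clip it should play."""

    def __init__(self) -> None:
        self.state = "idle"

    def handle(self, event: str) -> str:
        """Apply an input event and return the clip name for the new state."""
        self.state = TRANSITIONS.get((self.state, event), self.state)
        return CLIPS[self.state]


if __name__ == "__main__":
    prop = InteractiveObject()
    for event in ("gaze_enter", "grab", "release"):
        print(event, "->", prop.handle(event))
```

Keeping the transition table separate from the clip table lets animators rename or swap clips without touching the interaction logic.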

- C. Interactivity & Spatial Design

Decide how users move and interact: teleportation, hand tracking, or controller navigation. Ensure 3D elements respect depth, occlusion, and spatial consistency to feel naturally grounded.
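Gaze-based interaction often comes down to a simple geometric test: is the target inside a narrow cone around the direction the user is looking? The following is a minimal sketch of that idea using plain vectors. The function name and the 10-degree default are assumptions for illustration, not values taken from any particular engine or SDK.

```python
import math


def is_gazed_at(head_pos, forward, target_pos, max_angle_deg=10.0):
    """Return True if target_pos lies within a cone around the gaze direction.

    head_pos, forward, and target_pos are 3-element sequences (x, y, z).
    max_angle_deg is the half-angle of the cone (an assumed default).
    """
    to_target = [t - h for t, h in zip(target_pos, head_pos)]
    dist = math.sqrt(sum(c * c for c in to_target))
    fwd_len = math.sqrt(sum(c * c for c in forward))
    if dist == 0.0 or fwd_len == 0.0:
        return False  # Degenerate input: no meaningful direction to compare.

    # Cosine of the angle between the gaze direction and the target direction.
    cos_angle = sum(f * t for f, t in zip(forward, to_target)) / (dist * fwd_len)
    cos_angle = max(-1.0, min(1.0, cos_angle))  # Guard against rounding drift.
    return math.degrees(math.acos(cos_angle)) <= max_angle_deg


if __name__ == "__main__":
    head = (0.0, 1.6, 0.0)
    looking = (0.0, 0.0, -1.0)
    print(is_gazed_at(head, looking, (0.0, 1.6, -2.0)))
    print(is_gazed_at(head, looking, (2.0, 1.6, -2.0)))
```

Engines usually provide raycasts against colliders for this purpose; the cone test is shown here only because it makes the underlying geometry explicit.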

- D. Performance & Optimization

Keep frame rates high (ideally 90 fps for VR, which leaves roughly 11 ms to simulate and render each frame). Optimize models, bake lighting where possible, and tailor quality levels to each device.
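To make the frame-rate target concrete, it helps to convert it into a per-frame time budget and compare measured frame times against it. The sketch below does that and steps a quality tier up or down accordingly. The tier names and the 0.8 headroom factor are hypothetical choices for illustration; real projects would tune these per device.

```python
QUALITY_TIERS = ("low", "medium", "high")  # Hypothetical tier names.


def frame_budget_ms(target_fps: float) -> float:
    """Time available to simulate and render one frame, in milliseconds."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def adjust_quality(current_index: int, measured_frame_ms: float,
                   target_fps: float, headroom: float = 0.8) -> int:
    """Return the index of the quality tier to use next.

    Steps down one tier when a frame runs over budget, and steps up one tier
    only when the frame finished comfortably under budget (assumed headroom).
    """
    budget = frame_budget_ms(target_fps)
    if measured_frame_ms > budget and current_index > 0:
        return current_index - 1
    if measured_frame_ms < budget * headroom and current_index < len(QUALITY_TIERS) - 1:
        return current_index + 1
    return current_index


if __name__ == "__main__":
    print(f"Budget at 90 fps: {frame_budget_ms(90):.2f} ms")
    tier = adjust_quality(2, measured_frame_ms=14.0, target_fps=90)
    print("Next tier:", QUALITY_TIERS[tier])
```

The headroom gap between stepping down and stepping up is there to avoid flickering between two tiers when frame times hover near the budget.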

- E. Testing, Iteration & Deployment

Test with real users, refine pacing and interaction, and optimize for comfort before deploying across platforms.

Key Features That Make Interactive 3D Animation Work

- Realism: believable textures, lighting, and physics.

- Interactivity: responsive animation tied to user input.

- Spatial awareness: consistent perspective and depth.

- Performance: smooth, lag-free rendering for immersion.

- Cross-platform design: adaptability between VR and AR devices.

Challenges & Best Practices

Challenges: motion sickness, interaction complexity, and balancing fidelity with performance.

Best Practices: use intuitive controls, test early, and prioritize user comfort over visual excess.

As hardware evolves and AI enters creative pipelines, we’ll see more dynamic, user-driven animation and cross-reality continuity – experiences that adapt in real time to user behavior and context.